Picking a Google News keyword pipeline means choosing custody, pricing shape, and maintenance appetite, not just whichever vendor brands itself the fastest Google News scraper. This comparison lines up hosted SERP APIs, marketplace actors on Apify, visual cloud templates, and UScraper’s Google News Keyword Scraper export so you can collect and export headline rows without surprise procurement gaps.

Landscape

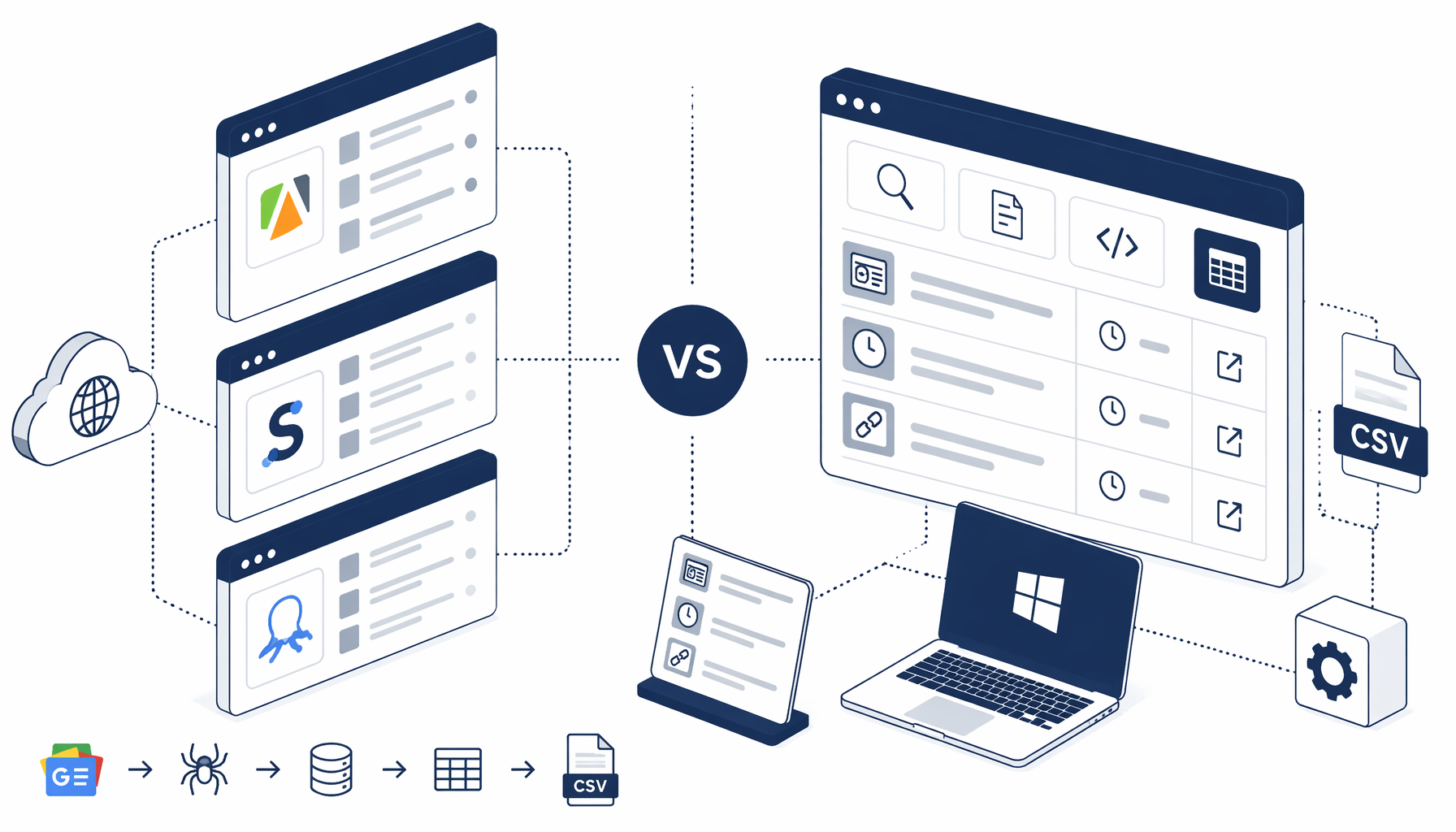

Four families appear in nearly every "Google News scraping tools" search

- Hosted Google News SERP APIs deliver JSON contracts with egress abstracted away—canonical examples include SerpApi Google News, Bright Data Google News SERP API, and Oxylabs Google News Scraper API. Product researchers often stack these beside broader data extraction software RFP tracks on G2 Web Scraping or Capterra web scraping listings.

- Cloud marketplace actors package Chromium automation with quotas. Browse Apify Google News scraper (lhotanova), Apify scrapeio Google News headlines, or Apify vnx0 Google News actor for alternate input schemas spanning keyword search and topic feeds, and read them alongside the official Apify platform docs.

- Hosted visual scrapers target analysts who drag selectors—reference Octoparse Google News template, Octoparse scraping Google News walkthrough, and ParseHub news scraping guide when evaluating low-code fleets that still invoice per seat or task minute.

- Desktop structured export keeps news.google.com inside a local Chromium session: import Google News Keyword Scraper from the templates directory, iterate Structured Export rows mapped to google-news-keyword.csv, and store the blueprint beside compliance evidence.

Reality check: every lane eventually pays for parser upkeep—sometimes through subscription credits, sometimes through midnight selector diffs inside UScraper graphs.

Companion reads on RSS URL formats versus DOM capture appear across ScrapingBee’s Google News write-up, Scrapfly’s API alternatives guide, and the Oxylabs scraping Google News blog. Bookmark them beside pygooglenews on GitHub for whenever engineering wants to cross-check implementations.
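The RSS route those write-ups describe can be sketched in a few lines of Python. This is a minimal sketch, not any vendor's implementation: the search-feed URL pattern (`/rss/search` with `q`, `hl`, `gl`, `ceid`) follows what public write-ups and pygooglenews describe rather than a documented Google contract, and the function names and sample feed are hypothetical. Parsing runs against an inline sample, so nothing is fetched.

```python
from urllib.parse import urlencode
import xml.etree.ElementTree as ET


def build_rss_search_url(keyword, hl="en-US", gl="US", ceid="US:en"):
    """Build a Google News RSS search URL (assumed pattern, not an official API)."""
    params = {"q": keyword, "hl": hl, "gl": gl, "ceid": ceid}
    return "https://news.google.com/rss/search?" + urlencode(params)


def parse_rss_items(xml_text):
    """Turn RSS <item> elements into rows with Title, Time, and Link keys."""
    root = ET.fromstring(xml_text)
    rows = []
    for item in root.iter("item"):
        rows.append({
            "Title": item.findtext("title", default=""),
            "Time": item.findtext("pubDate", default=""),
            "Link": item.findtext("link", default=""),
        })
    return rows


# Hypothetical sample feed so the sketch runs offline.
SAMPLE_FEED = (
    "<rss version='2.0'><channel>"
    "<item><title>Example headline</title>"
    "<link>https://example.com/story</link>"
    "<pubDate>Mon, 01 Jan 2024 09:00:00 GMT</pubDate></item>"
    "</channel></rss>"
)

url = build_rss_search_url("electric vehicles")
rows = parse_rss_items(SAMPLE_FEED)
print(url)
print(rows)
```

An RSS feed of this kind typically carries fewer fields than a DOM capture (no thumbnail column here), which is one of the trade-offs the linked guides discuss.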

Decision matrix

Compare price, hosting, code, output, and upkeep honestly

Treat the grid as conversation scaffolding—not a warranty about your SKU or locale.

| Criterion | Hosted SERP API (Google News lanes) | Cloud marketplace actor | Hosted visual SaaS template | UScraper + Google News Keyword export |
|---|---|---|---|---|
| Typical hosting | Vendor API edge + egress | Apify-managed runners | Cloud designer + connectors | Local Windows Chromium sessions |
| Code required | HTTP client plus auth secrets | Mostly config + webhook glue | Wizard DSL plus recipes | Inspect Navigate / Structured Export JSON graphs |
| Pricing shape | Per-pull credit bundles | Marketplace minutes + proxies | SaaS tiers + concurrency | Desktop license plus free template JSON imports |
| Output default | JSON payloads | CSV/JSON through actor dashboards | Hosted CSV pushes | google-news-keyword.csv Structured Export rows |
| Maintenance story | Vendor absorbs parser deltas | Actor authors redeploy tweaks | Vendor template updates surface first | You remap selectors after DOM churn |
| Data residency | Rows traverse vendor fleets | Stored run logs in cloud | Hosted runs + exports land in vendor accounts | Disk paths you nominate offline |

Teams that already fund automation seats might pair the scheduled Outscraper Google News Zapier integration with their alerting stack. Prefer Google News Keyword Scraper when subscription meters feel outsized for a modest stakeholder CSV, when procurement wants block-level transcripts, or when auditors need a printable record showing that the workflow is a scraper rather than a crawler.

Custody narrative

Why teams still bounce between SERP scraping and desktop CSV lineage

Hosted stacks promise deterministic payloads with retries, backoff, geo targeting, and headless-browser ergonomics built in, without internal SRE hires. Analysts covering SEO monitoring, editorial boards, crisis comms, or commercial intelligence reasonably pilot SerpApi, Bright Data, Oxylabs, ScrapingBee, Scrapfly, or curated Octoparse exports before signing another PO.

Yet many Windows-first desks only need repeatable titles, timestamps, outbound article URLs, and thumbnail proofs zipped into spreadsheets, without routing traffic through unfamiliar jurisdictions. The authoritative workflow artifact, google_news_keyword_scraper_export.json, shows Structured Export columns (Title, Time, Author, Link, Image) bound to c-wiz rows alongside Inject JavaScript guards, Scroll hydration, Sleep pacing tuned for lazy rendering, Element Exists loop exits, and an End node that cleanly terminates branching. Screenshots go stale fast; diff the JSON after Google experiments with layout.
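One way to notice layout churn before stakeholders do is to audit each export for missing or suddenly empty columns. The following is a hedged sketch that only assumes the five column names listed above; `audit_export` and the sample rows are hypothetical and not part of UScraper.

```python
import csv
import io

# Column names taken from the Structured Export description above.
EXPECTED_COLUMNS = ["Title", "Time", "Author", "Link", "Image"]


def audit_export(csv_text):
    """Report the row count, missing columns, and blank cells per expected column."""
    reader = csv.DictReader(io.StringIO(csv_text))
    header = reader.fieldnames or []
    missing = [c for c in EXPECTED_COLUMNS if c not in header]
    blank_counts = {c: 0 for c in EXPECTED_COLUMNS if c in header}
    total = 0
    for row in reader:
        total += 1
        for column in blank_counts:
            if not (row.get(column) or "").strip():
                blank_counts[column] += 1
    return {"rows": total, "missing_columns": missing, "blank_counts": blank_counts}


# Hypothetical sample standing in for google-news-keyword.csv.
SAMPLE_CSV = (
    "Title,Time,Author,Link,Image\n"
    "Headline A,2 hours ago,Reporter,https://example.com/a,https://example.com/a.jpg\n"
    "Headline B,,,https://example.com/b,\n"
)

report = audit_export(SAMPLE_CSV)
print(report)
```

A column whose blank count jumps between runs is a hint that its selector no longer matches, which is a cue to inspect the workflow JSON.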

Pick your lane

Which archetype clears your job-to-be-done

Goal: defensible spreadsheets that compliance can file beside legal memos, referencing Google News Publisher Center help for context and verified against live news.google.com.

Stick with Google News Keyword Scraper when append semantics, offline custody, screenshot-friendly QA, scraper classification dossiers, or finance pushback against another hosted meter dominate the sprint. Continue with the Google News Keyword export how-to for click-path parity with production JSON.
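If repeated runs append to the same spreadsheet, a small post-processing step can keep duplicate headlines out. The sketch below assumes rows are keyed by the Link column; whether UScraper deduplicates on append is not stated here, so treat `merge_rows` as a hypothetical helper applied after export.

```python
def merge_rows(existing, new_rows, key="Link"):
    """Append new rows to existing ones, skipping rows whose key was already seen."""
    seen = {row[key] for row in existing if row.get(key)}
    merged = list(existing)
    for row in new_rows:
        value = row.get(key)
        # Rows without a key value are skipped because they cannot be compared.
        if value and value not in seen:
            seen.add(value)
            merged.append(row)
    return merged


# Hypothetical rows from two consecutive runs.
monday = [{"Title": "A", "Link": "https://example.com/a"}]
tuesday = [
    {"Title": "A again", "Link": "https://example.com/a"},
    {"Title": "B", "Link": "https://example.com/b"},
]

merged = merge_rows(monday, tuesday)
print([row["Title"] for row in merged])
```

Keying on Link rather than Title is a design choice: headlines get edited after publication, while the outbound URL is more likely to stay stable.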

Operational compass

Keep Google News exports proportional and explainable

Next clicks

Where to deepen this cluster on UScraper

Run the sibling walkthrough

Pair this comparison with google-news-keyword-scraper-export-how-to so narration matches google_news_keyword_scraper_export.json block order.

Harvest more templates

Explore additional templates covering engines, SERP exports, directories, then stack internal links referencing blog articles for topical authority.

Bench hosted APIs honestly

Re-read the Scrapfly Google News primer and the ScrapingBee DOM vs RSS guide before locking architecture. Both outline failure modes that Oxylabs also covers in its posts on scraping Google News.

Prefer Google News Keyword Scraper when local CSV, Windows custody, block-graph QA, inspectable pacing, predictable desktop licensing, or documentation scraper audits matter more than another metered API line item. Prefer hosted SERP APIs when fleet concurrency, managed proxies, SLA posture, and JSON-first contracts dominate the roadmap.

FAQ

Frequently asked questions

Apify publishes multiple Google News actors—see lhotanova’s keyword-focused scraper, scrapeio’s headline extractor, and vnx0’s richer actor—that bill against cloud quotas while exposing JSON/CSV egress. Hosted SERP vendors such as SerpApi, Bright Data Google News SERP API, and Oxylabs News Scraper API monetize deterministic HTTP responses. Octoparse and ParseHub prioritize drag-and-drop cloud runs. UScraper executes Google News Keyword Scraper JSON locally, emits google-news-keyword.csv, and keeps Navigate, Structured Export, Scroll, Inject JavaScript, and Element Exists receipts on workstations you administer.