Picking a Yahoo Search export path is really a decision about where the browser runs, how you want to pay, and whether you need API-grade contracts or a CSV this afternoon. This comparison walks through hosted SERP APIs, cloud marketplace actors, hosted no-code templates, and local desktop structured export, including where UScraper’s Yahoo Search Export template is the honest fit (and where it is not).

Landscape

Who shows up when you need Yahoo Search rows in a file

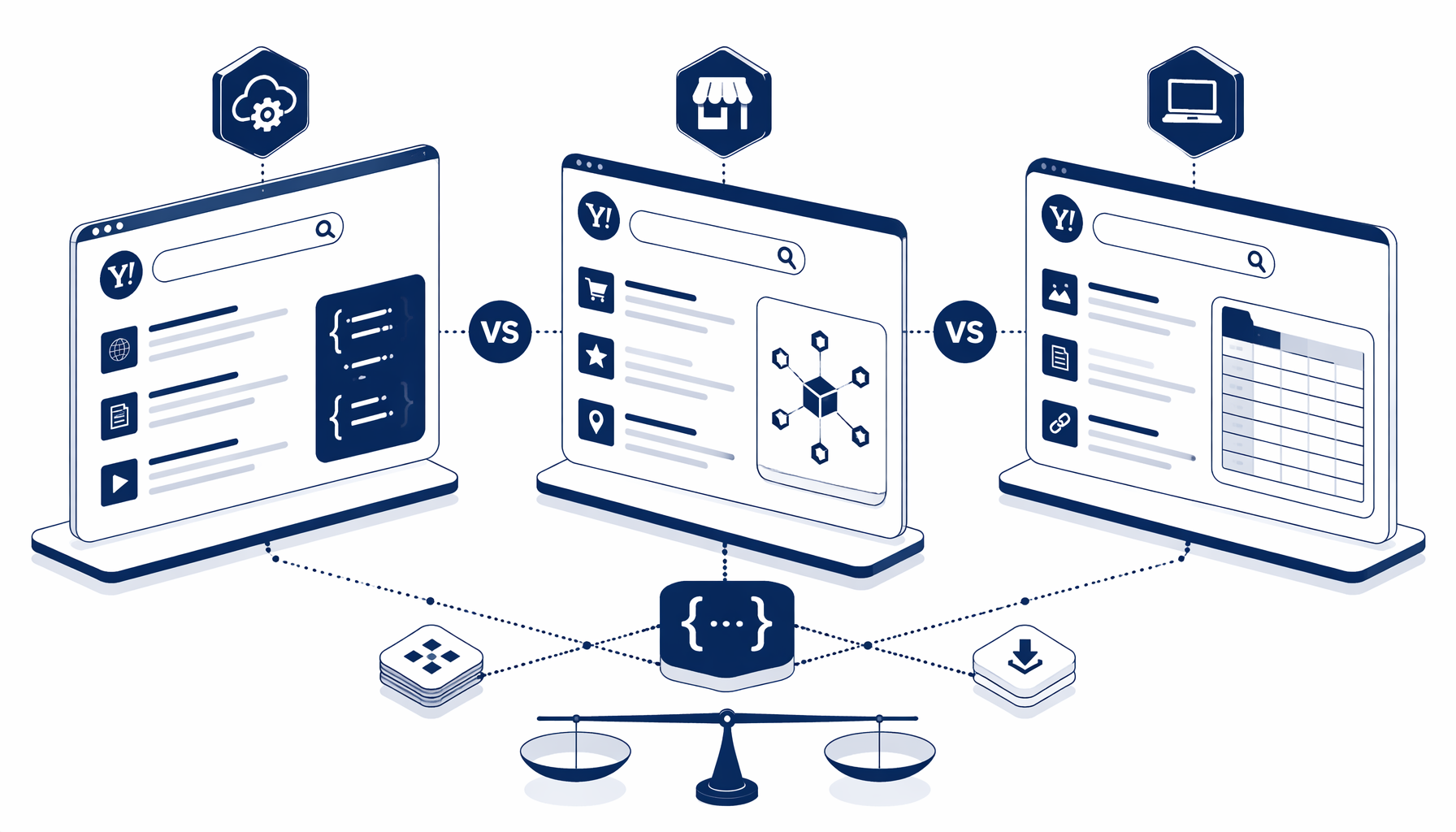

Most teams encounter four families of tools—each solves a different scale and paperwork profile:

- Hosted SERP APIs: SerpApi, Bright Data, DataForSEO, SearchAPI.io, and Oxylabs samples deliver JSON-ready SERPs with proxies factored in.

- Cloud marketplace actors: Apify’s Yahoo actor mirrors that model with platform billing; orchestration patterns echo Apify’s multi-engine repo.

- Hosted no-code: Octoparse’s Yahoo template and its French-locale sibling favor shared cloud projects.

- Desktop structured export: UScraper consumes JSON, drives the visible DOM, and appends CSV; start at yahoo_search_export for Navigate ➜ query ➜ submit ➜ export ➜ pagination.

Roundups (Zapier on web scraping, Capterra, G2 SERP tooling) help triangulate categories—confirm pricing locally. Readers search scrape, extract, export, and collect interchangeably; scraper versus crawler is a scope hint because SERP jobs are narrow listing pulls, not full-site spiders. Lightweight instant data scraper extensions suffice for demos, but repeatable columns and pacing usually pull teams toward Octoparse Yahoo templates, Apify actors, or UScraper JSON.

Neutral read: marketing pages love the word unlimited; your practical limit is how often you can touch SERPs before quality degrades or accounts flag risk.

Decision matrix

Compare price, hosting, code, and CSV output

Use the table as a compass; your contract tier, coupon, and proxy mix will move the numbers.

| Criterion | Hosted SERP API | Cloud marketplace actor | Hosted no-code template | UScraper + Yahoo Search Export |
|---|---|---|---|---|
| Typical hosting | Vendor API edge | Apify-style cloud | Vendor cloud | Your Windows PC |
| Code required | Integration code | Low: API + config | Low: visual designer | Low: block graph + JSON import |
| Pricing shape | Per-query or bundles | Usage + platform | Tiered SaaS | Desktop license; template free in library |
| Output fit | JSON / partial HTML | JSON/CSV via actor | Cloud CSV exports | Structured CSV you name (default sample uses bing.csv) |
| Selector drift | Abstracted by vendor | Medium | Medium | You edit selectors locally |
| Data residency | Vendor processors | Vendor processors | Vendor processors | Rows stay on paths you pick |

When cloud wins: always-on monitoring, multi-region SERP fleets, or teams that already budget for proxy spend. When local wins: one-off research packs, finance teams resisting another per-query line item.

Pick your lane

Which approach matches common jobs-to-be-done

Goal: defensible title / snippet / URL / favicon rows without another SaaS trial.

Import yahoo_search_export when you want inspectable sleeps, Structured Export rows, and .sw_next guards you can cite in QA reviews. Spot-check SERPs immediately, because layouts change silently.

Inside the template JSON

What the Yahoo Search Export bundle encodes (and why it mentions Bing-style selectors)

Treat the bundled exported project JSON as the authoritative contract rather than screenshots. The automation graph runs in order: Navigate, Sleep, Type Text into #sb_form_q, Inject JavaScript to submit the form, and a second Sleep. Structured Export then reads .b_algo rows into six columns: Title (h2 text), Description (.b_caption), Favicon (.rms_img src), Website (.tptt), Attribution (.b_attribution), and Date (.news_dt). An Element Exists check on .sw_next closes the loop, so next-page clicks continue only while a pagination control is still present.
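To make that block order easier to review, here is a minimal Python sketch that models the sequence as plain data. The key names (`type`, `selector`, `row_selector`, `fields`) are hypothetical and chosen only for illustration; the real exported JSON schema is not reproduced here, so treat this as a reading aid rather than UScraper's format. Only the selectors and column names come from the description above.

```python
# Hypothetical model of the block sequence described above. The key names
# are illustrative assumptions, not UScraper's actual JSON schema.
WORKFLOW = [
    {"type": "navigate"},
    {"type": "sleep"},
    {"type": "type_text", "selector": "#sb_form_q"},
    {"type": "inject_javascript"},  # submits the search form
    {"type": "sleep"},
    {
        "type": "structured_export",
        "row_selector": ".b_algo",
        "fields": {
            "Title": "h2",
            "Description": ".b_caption",
            "Favicon": ".rms_img",
            "Website": ".tptt",
            "Attribution": ".b_attribution",
            "Date": ".news_dt",
        },
    },
    {"type": "element_exists", "selector": ".sw_next"},  # pagination guard
]


def csv_columns(workflow):
    """Return the CSV column names declared by the Structured Export block."""
    for block in workflow:
        if block["type"] == "structured_export":
            return list(block["fields"])
    return []


def selectors_to_verify(workflow):
    """Collect every CSS selector worth re-checking in DevTools, in block order."""
    found = []
    for block in workflow:
        for key in ("selector", "row_selector"):
            if key in block:
                found.append(block[key])
        found.extend(block.get("fields", {}).values())
    return found
```

Calling `selectors_to_verify(WORKFLOW)` yields a checklist to walk through in DevTools whenever the SERP layout changes.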

Exports often ship with bing.csv defaults because Bing-style scaffolding dominates the SERP DOM in many locales—rename if it confuses finance. Transparent JSON from yahoo_search_export trades hidden SaaS breakage for upkeep you assign in DevTools (r.search.yahoo.com redirects, hungry favicons) using yahoo-search-export-how-to as the operational companion.

Pull the blueprint

Diff yahoo_search_export JSON against prototypes so QA sees identical blocks—not tribal knowledge.
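One way to run that comparison is a key-order-insensitive text diff. The sketch below uses only the Python standard library and assumes both projects have already been parsed (for example with `json.load`); the function names are illustrative.

```python
import difflib
import json


def canonical_lines(project):
    """Serialize a parsed project with sorted keys so key order never shows up as a diff."""
    return json.dumps(project, indent=2, sort_keys=True).splitlines()


def diff_projects(baseline, candidate):
    """Return unified-diff lines between two parsed JSON projects (empty if equivalent)."""
    return list(
        difflib.unified_diff(
            canonical_lines(baseline),
            canonical_lines(candidate),
            fromfile="baseline",
            tofile="candidate",
            lineterm="",
        )
    )
```

An empty result means the two files describe the same blocks; any `-`/`+` pair points at the exact selector or setting that drifted.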

Tune selectors offline

Reconfirm .b_algo plus child paths in your Yahoo locale prior to aggregates; lengthen sleeps whenever lazy captions blank out.
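A quick way to notice blanked captions is to measure how many exported rows have an empty Description. This sketch assumes the CSV has a header row whose column names match the template's field names; that layout is an assumption, so adjust the column name to whatever your export actually writes.

```python
import csv
import io


def blank_rate(csv_text, column="Description"):
    """Fraction of exported rows whose `column` is missing, empty, or whitespace."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    if not rows:
        return 0.0
    blanks = sum(1 for row in rows if not (row.get(column) or "").strip())
    return blanks / len(rows)
```

If the rate climbs after a run, lengthen the sleeps or recheck the caption selector before aggregating.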

Operationalize redirects

Keep r.search.yahoo.com hops for lineage or unwrap after export; finalize pacing guidance with yahoo-search-export-how-to.
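If you choose to unwrap after export, a small post-processing step can do it. The sketch below assumes the redirect carries the destination as a percent-encoded `/RU=<target>/` path segment; that shape is an assumption about Yahoo's wrapper and may change, so verify it against a few live links first. URLs that do not match are returned untouched.

```python
import re
from urllib.parse import unquote, urlsplit

# Assumed wrapper shape: https://r.search.yahoo.com/.../RU=<percent-encoded target>/RK=...
_RU_SEGMENT = re.compile(r"/RU=([^/]+)")


def unwrap_yahoo_redirect(url):
    """Return the decoded target of an r.search.yahoo.com link, or the input unchanged."""
    if urlsplit(url).hostname != "r.search.yahoo.com":
        return url
    match = _RU_SEGMENT.search(url)
    return unquote(match.group(1)) if match else url
```

Keeping the original wrapped URL in a separate column preserves lineage while the unwrapped value feeds deduplication.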

Related reading

Where to go next on UScraper

- Walk the execution path in yahoo-search-export-how-to—it matches the JSON graph this article decodes.

- Explore adjacent scrapers in the template library and scan the blog index for more Windows-first comparisons and tutorials.

If local custody, CSV-first output, and inspectable workflows outweigh another subscription ledger, UScraper’s Yahoo Search Export template holds the pragmatic middle lane. Migrate off Octoparse or Apify experiments by reproducing sleeps and selectors visibly, and escalate to sanctioned APIs when counsel mandates them.

FAQ

Frequently asked questions

Hosted SERP APIs return parsed JSON or HTML fragments and bill per query; marketplace actors such as Apify’s Yahoo scraper run headless browsers in vendor clouds with usage-based pricing; desktop structured export with UScraper renders pages on your Windows PC and writes append-friendly CSV locally. Pick APIs for contractual stability, actors for managed scale, and local flows when custody and predictable desktop cost matter more than multi-region concurrency, starting from yahoo_search_export when you want inspectable JSON blocks.