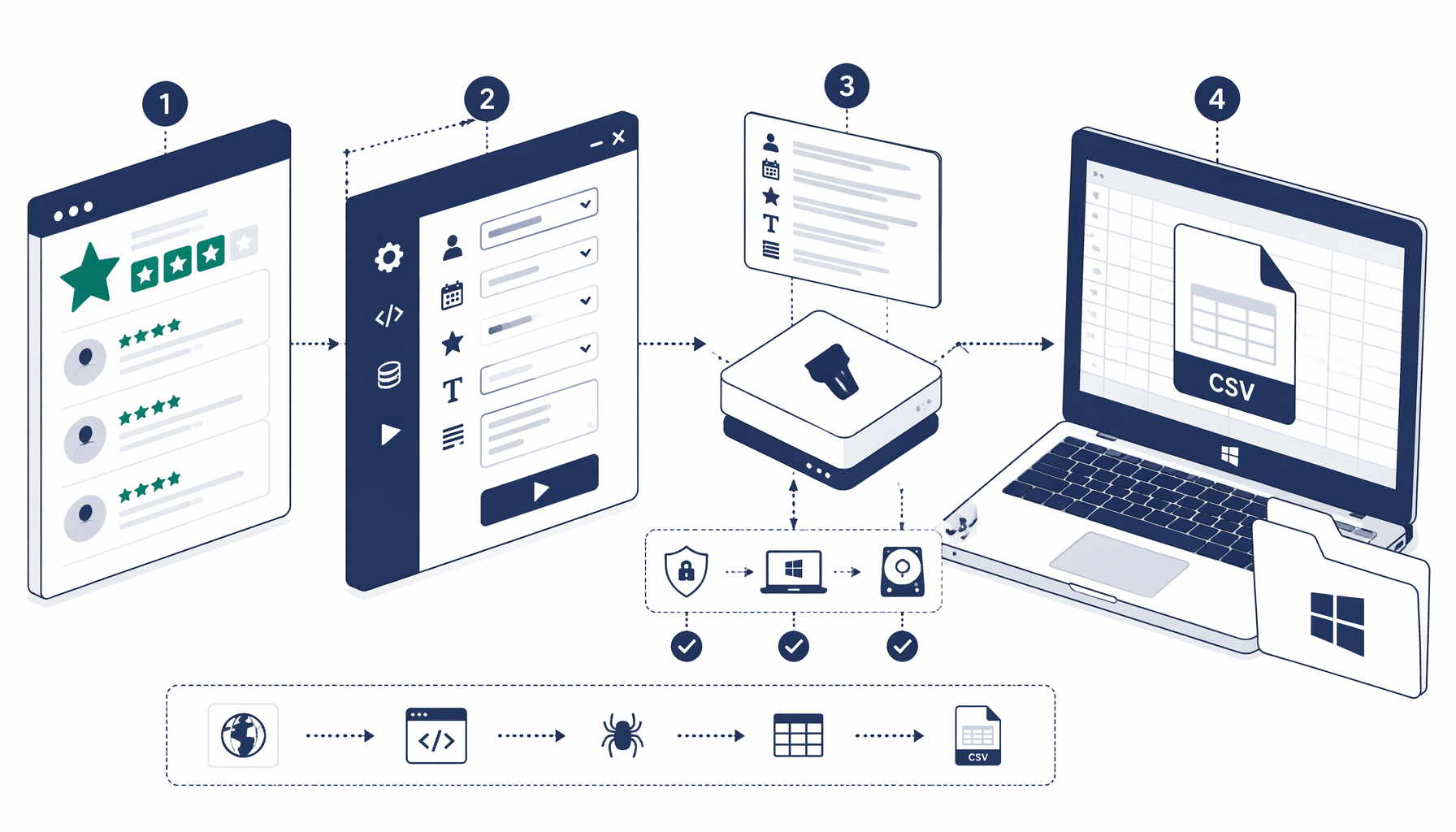

Marketing and CX teams often need Trustpilot reviews in CSV (reviewer names, timestamps, headlines, verbatim bodies, and star ratings) without standing up API credentials on day one. This tutorial explains when desktop structured export wins, how the published trustpilot_export graph paginates, and how to validate rows before they enter BI tools. Need the fastest path? Open the Trustpilot export entry in the template library, import trustpilot_export.json, point Navigate at a profile you are allowed to study, and retune selectors after every Trustpilot redesign.

Before you start

Prerequisites, compliance context, and who this helps

Assume you can responsibly load the Trustpilot business profile you intend to analyze in Chromium, store an append-friendly .csv, and revisit selector maintenance whenever Trustpilot ships a visual refresh. This guide fits reputation analysts, product marketers tracking release sentiment, CX leads closing the loop on complaints, and researchers building internal briefing packs—all operating under counsel-approved use of third-party content.

Pair hands-on automation with Trustpilot's own policy layers: review the Trustpilot developer hub, authentication overview, business units API, and service reviews API when your organization can onboard official credentials. Consumer-facing rules live in Trustpilot's terms of use for consumers and terms for businesses—they matter even when data appears public in a browser.

Pick your lane

Desktop export, official APIs, or hosted scraping fleets

Desktop export fits best when stakeholders want spreadsheet-grade CSV, visual confirmation in DevTools, and custody that never leaves your disk unless you upload it afterward.

Anchor every run against the canonical download surfaced at https://uscraper.io/templates/trustpilot_export so block IDs (navigate-*, structured-export-*, element-exists-*, inject-javascript-*) remain aligned with changelog screenshots.

Trade-offs: Analysts—not a remote SRE team—must patch selectors whenever Trustpilot rotates typography classes (styles_consumerName__… fingerprints in bundled JSON illustrate how brittle hashed tokens can be).

Field map

Columns the Structured Export blueprint captures

The published workflow stores append-friendly batches in trustpilot.csv with headers emitted once and fileMode set to append so multi-page runs accumulate safely. Row scope targets Trustpilot review cards exposed as article[data-service-review-card-paper="true"]—revalidate in DevTools because attribute names and inner class tokens drift.

| Column | Selector intent | Operational notes |
|---|---|---|
| Reviewer | Consumer display name class (.styles_consumerName__… in the blueprint) | Pair with review headline hash if names collide or anonymize downstream for public reporting. |
| Time | Native <time> element text | Normalize relative phrases ("1 week ago") before mixing with warehouse timestamps. |
| Heading | Anchor carrying data-review-title-typography="true" | Captures the bold review title line users see in card layouts. |
| Description | Body typography class (CDS_Typography_body-l__… token in JSON) | Watch for multi-paragraph reviews: ensure the selector spans the full body, not truncated teasers. |
| Rating | data-service-review-rating via row-scoped JavaScript | If the expression falls back to '5', confirm you are still bound to each card; global queries exaggerate scores. |

The graph adds Scroll and Inject JavaScript blocks so each loop loads additional DOM, clicks the Next pagination control (document.querySelector('a[name="pagination-button-next"]') in the sample), and returns to the Sleep node until an Element Exists probe sees a disabled next link (a[data-pagination-button-next-link="true"][aria-disabled="true"]).
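The loop described above can be modeled as plain control flow. The following is a minimal Python sketch, not the UScraper runtime: the function name, the callables, the `FakeProfile` class, and the `max_pages` cap are all hypothetical, and the step order is an assumption (the real order is whatever the connections array in the full trustpilot_export.json encodes).

```python
from typing import Callable, Dict, List

Row = Dict[str, str]


def run_export_loop(
    wait: Callable[[], None],
    export_rows: Callable[[], List[Row]],
    next_is_disabled: Callable[[], bool],
    click_next: Callable[[], None],
    max_pages: int = 500,
) -> List[Row]:
    """Collect rows page by page until the Next link reports disabled.

    The step order is an assumption for illustration; the real order lives in
    the connections array of trustpilot_export.json.
    """
    collected: List[Row] = []
    for _ in range(max_pages):  # hard cap so a stuck probe cannot loop forever
        wait()                           # stands in for the Sleep node
        collected.extend(export_rows())  # stands in for Structured Export
        if next_is_disabled():           # stands in for the Element Exists probe
            break
        click_next()                     # stands in for Inject JavaScript
    return collected


class FakeProfile:
    """Hypothetical in-memory stand-in for a paginated review profile."""

    def __init__(self, pages: List[List[Row]]) -> None:
        self.pages = pages
        self.index = 0

    def export_rows(self) -> List[Row]:
        return list(self.pages[self.index])

    def next_is_disabled(self) -> bool:
        return self.index >= len(self.pages) - 1

    def click_next(self) -> None:
        self.index += 1


profile = FakeProfile([
    [{"Reviewer": "A"}, {"Reviewer": "B"}],
    [{"Reviewer": "C"}],
    [{"Reviewer": "D"}],
])
rows = run_export_loop(lambda: None, profile.export_rows,
                       profile.next_is_disabled, profile.click_next)
print(len(rows))  # 4
```

Exporting before probing for the disabled link means the last page is still captured; if the probe ran first, the final page of reviews would be skipped.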

Authoritative JSON sample

How the trustpilot_export.json graph is shaped

Treat the repository bundle as the source of truth: every block title, config.url, selector string, and connections edge must stay synchronized when you fork the workflow. The excerpt below mirrors the trustpilot_export blueprint that ships with the Trustpilot export template download from UScraper. It is trimmed for readability but preserves block types and Structured Export intent.

    {
      "version": "1.0.0",
      "project": { "name": "Trustpilot", "description": null },
      "blocks": [
        {
          "block_id": "navigate-1755811915181",
          "block_type": "process",
          "title": "Navigate",
          "config": { "url": "https://example.com" }
        },
        {
          "block_id": "structured-export-1755812016827",
          "block_type": "process",
          "title": "Structured Export",
          "config": {
            "fileName": "trustpilot.csv",
            "rowSelector": "article[data-service-review-card-paper=\"true\"]",
            "includeHeaders": true,
            "fileMode": "append",
            "columns": [
              { "name": "Reviewer", "selector": ".styles_consumerName__xKr9c", "attribute": "text" },
              { "name": "Time", "selector": "time", "attribute": "text" },
              { "name": "Heading", "selector": "a[data-review-title-typography=\"true\"]", "attribute": "text" },
              { "name": "Description", "selector": ".CDS_Typography_body-l__dd9b51", "attribute": "text" },
              {
                "name": "Rating",
                "selector": "ROW?.querySelector('div[data-service-review-rating]')?.getAttribute('data-service-review-rating') || '5'",
                "attribute": "alt",
                "isJs": true
              }
            ]
          }
        },
        {
          "block_id": "element-exists-1755812146416",
          "block_type": "process",
          "title": "Element Exists",
          "config": {
            "selector": "a[data-pagination-button-next-link=\"true\"][aria-disabled=\"true\"]"
          }
        },
        {
          "block_id": "inject-javascript-1755814228327",
          "block_type": "process",
          "title": "Inject JavaScript",
          "config": {
            "jsCode": "document.querySelector('a[name=\"pagination-button-next\"]').click()"
          }
        }
      ]
    }

JSON is the contract: If marketing copy and the downloaded file disagree, trust the connections array in the full trustpilot_export.json; it encodes the pagination loop order your runtime actually executes.
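Before running a forked graph, it can help to sanity-check the file against the shape shown in the excerpt. The sketch below is one possible check in Python, assuming only the keys visible in the excerpt (blocks, title, config, columns, fileMode) plus a top-level connections key; `check_graph`, `EXPECTED_TITLES`, and `EXPECTED_COLUMNS` are hypothetical names, and the internal structure of the connections array is not inspected because the excerpt does not show it.

```python
EXPECTED_TITLES = {"Navigate", "Structured Export", "Element Exists", "Inject JavaScript"}
EXPECTED_COLUMNS = ["Reviewer", "Time", "Heading", "Description", "Rating"]


def check_graph(graph: dict) -> list:
    """Return a list of human-readable problems found in a parsed graph."""
    problems = []
    blocks = graph.get("blocks", [])
    titles = {block.get("title") for block in blocks}
    for missing in sorted(EXPECTED_TITLES - titles):
        problems.append(f"missing block: {missing}")
    # Only presence is checked; the shape of each edge is not shown in the excerpt.
    if not graph.get("connections"):
        problems.append("connections array is missing or empty")
    for block in blocks:
        if block.get("title") != "Structured Export":
            continue
        config = block.get("config", {})
        names = [column.get("name") for column in config.get("columns", [])]
        if names != EXPECTED_COLUMNS:
            problems.append(f"unexpected columns: {names}")
        if config.get("fileMode") != "append":
            problems.append("fileMode is not 'append'")
    return problems


# Tiny hand-built graph standing in for a parsed trustpilot_export.json.
sample = {
    "blocks": [
        {"title": "Navigate", "config": {"url": "https://example.com"}},
        {"title": "Structured Export", "config": {
            "fileMode": "append",
            "columns": [{"name": name} for name in EXPECTED_COLUMNS],
        }},
        {"title": "Element Exists", "config": {}},
    ]
}
problems = check_graph(sample)
for problem in problems:
    print(problem)
```

For a real file, parse it with `json.load` and pass the resulting dict to `check_graph`; an empty list means none of these particular checks failed, not that the selectors still match the live page.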

Hands-on workflow

Run the Trustpilot export end-to-end

Import, validate, then widen the loop

Start from the Trustpilot export template (trustpilot_export) so UUIDs and connector sides continue to match support screenshots.

Download and import the JSON graph

Pull trustpilot_export.json from https://uscraper.io/templates/trustpilot_export and load it verbatim—Navigate, Sleep, Element Exists, Structured Export, Scroll, and Inject JavaScript nodes depend on each other's ordering.

Point Navigate at the approved profile URL

Replace the placeholder host with the exact Trustpilot language variant you need; localized templates change heading density and pagination affordances.

Dry-run Structured Export on a single page

Temporarily break the loop (or stop after the first export) to confirm nonzero rows for Reviewer, Time, Heading, Description, and Rating before you scale runtime.

Restore pagination and monitor Element Exists branching

Re-enable edges so disabled Next buttons terminate cleanly while active buttons continue exporting—watch logs for flickering aria states Trustpilot uses during SPA transitions.

Append responsibly and snapshot filenames

Keep headers-once / append-on semantics for longitudinal studies, yet rotate filenames whenever you freeze evidence for regulators or detractor-response programs.

Dedupe downstream before NLP or dashboards

Hash Reviewer + Heading + substring(Description) in SQL or Sheets to suppress duplicates created by rerun overlaps or collapsed pagination states.
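The dedupe step can also be done in a script rather than SQL or Sheets. Here is a minimal Python sketch of the same idea, hashing Reviewer, Heading, and a prefix of Description; the 80-character prefix, the whitespace and case normalization, and the names `row_key` and `dedupe` are illustrative assumptions, not part of the template.

```python
import hashlib
from typing import Dict, Iterable, List

Row = Dict[str, str]


def _clean(value) -> str:
    """Collapse whitespace and lowercase so cosmetic differences do not split keys."""
    return " ".join((value or "").split()).lower()


def row_key(row: Row, body_chars: int = 80) -> str:
    """Hash Reviewer + Heading + the first body_chars characters of Description."""
    parts = [
        _clean(row.get("Reviewer")),
        _clean(row.get("Heading")),
        _clean(row.get("Description"))[:body_chars],
    ]
    # The unit separator keeps "ab" + "c" distinct from "a" + "bc".
    return hashlib.sha256("\x1f".join(parts).encode("utf-8")).hexdigest()


def dedupe(rows: Iterable[Row]) -> List[Row]:
    """Keep the first occurrence of each key, preserving input order."""
    seen = set()
    unique = []
    for row in rows:
        key = row_key(row)
        if key in seen:
            continue
        seen.add(key)
        unique.append(row)
    return unique


reviews = [
    {"Reviewer": "Ana", "Heading": "Great", "Description": "Fast delivery"},
    {"Reviewer": "ana ", "Heading": "Great", "Description": "Fast  delivery"},
    {"Reviewer": "Ben", "Heading": "Great", "Description": "Fast delivery"},
]
unique_reviews = dedupe(reviews)
print(len(unique_reviews))  # 2
```

Time is left out of the key on purpose: relative stamps such as "1 week ago" change between reruns, so including them would let the same review through twice.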

Validation

Validate CSV output against the live profile

Pivot tables should reconcile unique reviewers, star histograms, and recency buckets against what stakeholders manually scrolled in the Trustpilot UI. Sudden spikes in duplicate hashes usually mean pagination clicked faster than hydrated cards, while empty Heading columns often betray missing data-review-title-typography attributes on abbreviated cards.
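The same reconciliation can be scripted as a quick pre-check before building pivot tables. The sketch below, in Python with only the standard library, counts rows, unique reviewers, a star histogram, and empty cells per column; the `summarize` helper and the inline sample data are hypothetical, and the column names are the five headers from the blueprint.

```python
import csv
import io
from collections import Counter

COLUMNS = ["Reviewer", "Time", "Heading", "Description", "Rating"]

# Invented sample rows in the shape of trustpilot.csv, for illustration only.
SAMPLE = """\
Reviewer,Time,Heading,Description,Rating
Ana,2 days ago,Great,Fast delivery,5
Ben,1 week ago,,Slow support,2
Ana,3 days ago,Again good,Would return,5
"""


def summarize(rows):
    """Return counts that can be compared against the live profile by eye."""
    rows = list(rows)
    reviewers = {(row.get("Reviewer") or "").strip() for row in rows} - {""}
    return {
        "rows": len(rows),
        "unique_reviewers": len(reviewers),
        "star_histogram": dict(Counter((row.get("Rating") or "").strip() for row in rows)),
        "empty_by_column": {
            column: sum(1 for row in rows if not (row.get(column) or "").strip())
            for column in COLUMNS
        },
    }


summary = summarize(csv.DictReader(io.StringIO(SAMPLE)))
print(summary)
```

To run it against a real export, open trustpilot.csv with `newline=""` and pass the `csv.DictReader` to `summarize`. A nonzero Heading count in `empty_by_column` is the signal described above for cards missing the title attribute.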

Normalize Time values before longitudinal charts—mixed relative and absolute stamps confuse spreadsheet engines. If Rating sticks at five stars everywhere, revisit the blueprint's JavaScript expression: it deliberately falls back to '5' when attributes vanish, which masks binding bugs.
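Both checks in this paragraph are easy to script. The following Python sketch converts relative phrases to ISO dates and flags a Rating column that is uniformly '5'. The accepted phrase shapes, the absolute date formats, the 20-row threshold, and the function names are all assumptions for illustration; check them against the strings your export actually contains, and note that month and year phrases are left unparsed because their length is ambiguous.

```python
import re
from datetime import datetime, timedelta
from typing import Iterable, Optional

# Matches phrases such as "2 days ago", "1 week ago", "an hour ago".
_RELATIVE = re.compile(r"^(?:(\d+)|an?)\s+(minute|hour|day|week)s?\s+ago$", re.IGNORECASE)

# Assumed absolute formats; %b and %B depend on the process locale.
_ABSOLUTE_FORMATS = ("%b %d, %Y", "%B %d, %Y", "%Y-%m-%d")


def normalize_time(text: str, now: datetime) -> Optional[str]:
    """Return an ISO date string, or None when the text is not recognized."""
    text = text.strip()
    match = _RELATIVE.match(text)
    if match:
        count = int(match.group(1)) if match.group(1) else 1
        unit = match.group(2).lower() + "s"  # timedelta takes plural keyword names
        return (now - timedelta(**{unit: count})).date().isoformat()
    for fmt in _ABSOLUTE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue
    return None


def rating_looks_stuck(ratings: Iterable[str], min_rows: int = 20) -> bool:
    """Flag a column where every value is '5', which may be the fallback firing."""
    values = [value.strip() for value in ratings]
    return len(values) >= min_rows and set(values) == {"5"}


run_time = datetime(2024, 3, 15, 12, 0)  # record the real export time per run
print(normalize_time("1 week ago", run_time))   # 2024-03-08
print(normalize_time("Jan 5, 2024", run_time))  # 2024-01-05
print(rating_looks_stuck(["5"] * 25))           # True
```

Passing the export time in explicitly, rather than calling `datetime.now()` inside the function, keeps results reproducible when an older CSV is reprocessed. A genuinely five-star profile also trips `rating_looks_stuck`, so treat a True result as a prompt to inspect the binding in DevTools, not as proof of a bug.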

Community references such as Stack Overflow discussions on pandas exports, open-source parsers, or hands-on Beautiful Soup videos illustrate code-first workflows you can benchmark against—but desktop structured export still wins when policy teams insist on supervised, repeatable CSV snapshots.

Local desktop export vs hosted Trustpilot actors

| Dimension | UScraper Structured Export on Windows | Managed cloud lanes |
|---|---|---|
| Data custody | Rows land beside your project folders by default | Vendors route traffic via their infra (Apify, Bright Data, etc.) unless you self-host runners |
| Selector ownership | Analysts remap visually whenever Trustpilot changes DOM | Operators patch presets centrally, but you still chase layout drift |
| Spend model | Fits desktop license budgeting alongside human QA | Pay for concurrency, proxies, and observability tiers |
| Best for | Auditable snapshots under strict residency | Burst workloads across thousands of profiles |

FAQ

Frequently asked questions

Trustpilot publishes consumer and business terms that govern how people may use the platform, and separate Trustpilot for Business API programs govern authenticated data access. Publicly visible review pages can still be restricted by contract, copyright in user-generated text, and regional privacy rules. Many teams limit exports to profiles they operate, throttle automation, keep derivatives internal, and obtain legal review before commercial reuse. Read Trustpilot's current consumer terms, business terms, and developer documentation alongside counsel before large-scale collection.

Related links and next steps

- Download the Trustpilot export JSON blueprint—fastest path from article to runnable blocks.

- Explore all UScraper templates when you chain Trustpilot exports with other marketplace workflows.

- Browse the UScraper blog for adjacent Windows-first tutorials and comparison clusters.

Trustpilot will keep evolving its card layout—treat this guide as an operational framework: import the graph, prove selectors on one page, re-open the loop, and validate CSV totals every time marketing ships a new profile skin.