Picking an HTML web scraper is really a choice of runtime (their cloud versus your Windows desktop), pricing model (subscription and usage tiers versus a local app license), and how much code you want between “see page” and “see rows.” This piece compares hosted visual tools, marketplace actors such as Apify Web Scraper, open-source crawling stacks, and UScraper’s block-based JSON import—with an honest look at trade-offs before you optimize for throughput you may never need.

Landscape

Who shows up when you search for HTML scraping software

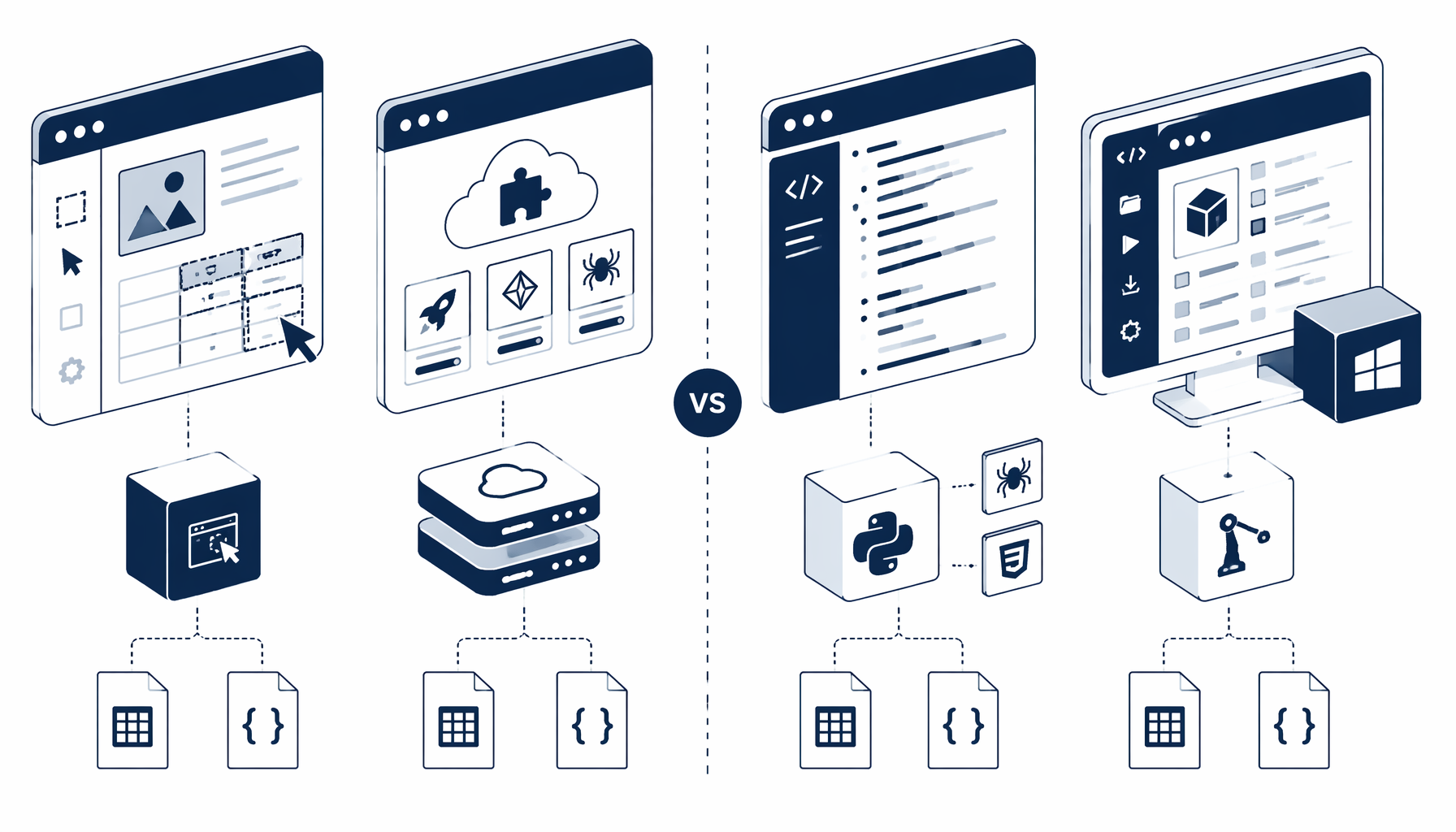

Most serious options fall into four buckets, each candid about what it optimizes for:

- Hosted visual scrapers (Octoparse, ParseHub, peers) — Point-and-click flows, documentation hubs, and pricing tuned for recurring seats; strong when collaborators want shared projects more than a Windows-only binary. Product sites and help centers such as Octoparse, Octoparse HTML scraping techniques, ParseHub, and ParseHub documentation spell out how they think about maintenance and training.

- Cloud marketplaces (Apify Store and similar) — Prebuilt actors like the Apify Web Scraper run on vendor infrastructure with docs such as the Apify Academy Web Scraper tutorial. Great when you want someone else to operate headless fleets and handle scaling knobs.

- Frameworks and hand-written extractors (Scrapy, Playwright, Beautiful Soup) — Total control and testability; selector discipline follows references like Scrapy selectors and MDN querySelector. Best when engineering owns long-term selector debt.

- Desktop block automation with JSON import (UScraper) — Import a workflow, render pages locally, wire Navigate → wait → extract steps, and keep outputs on disk—suited to analysts who live in spreadsheets. Start from templates/html_web_scraper when that profile matches yours.

Directories such as Capterra web scraping software and the G2 data extraction grid help triangulate reviews; always validate claims on your own target pages.

Neutral read: marketed ease-of-use scores rarely survive your site’s bot defenses, localized variants, or sudden DOM refactors, so budget time for selector rehearsal, not only for vendor demos.
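One way to make selector rehearsal concrete is to re-run every selector against saved snapshots of your target pages and flag any that suddenly match nothing. The sketch below is a stdlib-only Python illustration, not part of any vendor’s tooling. It simplifies a CSS selector to a hypothetical `(tag, class)` pair, and the snapshot labels and selector names are made up for the example.

```python
from html.parser import HTMLParser


class MatchCounter(HTMLParser):
    """Counts start tags matching a (tag, class) pair, a crude stand-in for a CSS selector."""

    def __init__(self, tag: str, css_class: str) -> None:
        super().__init__()
        self.tag = tag
        self.css_class = css_class
        self.count = 0

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs; value is None for bare attributes
        classes = (dict(attrs).get("class") or "").split()
        if tag == self.tag and self.css_class in classes:
            self.count += 1


def rehearse(snapshots: dict[str, str],
             selectors: dict[str, tuple[str, str]]) -> dict[str, list[str]]:
    """Map each selector name to the snapshot labels where it matched nothing."""
    misses: dict[str, list[str]] = {}
    for name, (tag, css_class) in selectors.items():
        for label, html in snapshots.items():
            counter = MatchCounter(tag, css_class)
            counter.feed(html)
            counter.close()
            if counter.count == 0:
                misses.setdefault(name, []).append(label)
    return misses


if __name__ == "__main__":
    # Hypothetical snapshots saved before and after a site redesign
    snapshots = {
        "march": '<div class="price">$10</div><div class="price sale">$8</div>',
        "april": '<span class="price-tag">$10</span>',
    }
    selectors = {"price": ("div", "price"), "title": ("h1", "title")}
    print(rehearse(snapshots, selectors))
```

Running the demo prints `{'price': ['april'], 'title': ['march', 'april']}`: the price selector broke in the April snapshot, and the title selector never matched at all. That is exactly the kind of drift worth catching before a scheduled run, whichever tool executes it.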

Decision criteria

Compare on price, hosting, code depth, and output

Use the matrix as a shorthand; renewals, coupons, and proxy add-ons move real numbers monthly.

| Criterion | Hosted visual SaaS | Cloud marketplace actor | Scripted stack (Scrapy / Playwright) | UScraper + HTML template |
|---|---|---|---|---|
| Typical hosting | Vendor cloud projects | Vendor cloud workers | Your servers / CI | Your Windows PC |
| Code required | Low (visual designer) | Low (JSON config and API calls) | High (repos, tests, deploys) | Low (graph blocks + JSON import) |
| Pricing shape | Per-seat / run tiers (Octoparse pricing, ParseHub pricing) | Usage + subscription (Apify platform) | Engineering labor + infrastructure | Desktop license; template free in the library |
| Output fit | Cloud CSV / exports | JSON, datasets, downstream webhooks | Anything you script | Local files: HTML fragments or chained export steps |
| Selector drift | You fix in the hosted UI | You fix via actor config | You patch in Git | You patch blocks locally |
| Data residency | Vendor processors | Vendor processors | Your policy | Stays on hardware you control |

For massive parallel crawls and managed anti-bot routes, cloud marketplaces remain hard to beat. For moderate pulls where finance dislikes another metered line item, a local JSON graph centered on html_web_scraper is often simpler to expense once and operate for months.

Pick your lane

Which approach matches common jobs-to-be-done

Goal: capture stable HTML snippets or intermediate text for spreadsheets without standing up Kubernetes.

- Favor local execution when security reviews will not approve adding another SaaS vendor to your subprocessor list.

- Start from https://uscraper.io/templates/html_web_scraper to mirror a Navigate → Sleep → Extract HTML recipe you can tweak in minutes, then combine with spreadsheet cleanup.

Platform depth

Why marketplace actors and visual SaaS both sit on top of HTML

Whether the UI is nodes on a canvas or YAML in a repo, the core task is the same: fetch, wait for meaningful paint, select nodes, serialize. WHATWG’s DOM fundamentals do not care which logo ships the runner. The difference is who operates the headless browsers and queues, and who pays the egress bills.
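The four stages fit in a few lines of Python. This is a minimal sketch under stated assumptions: it fetches with plain `urllib` (no JavaScript runs, so the wait stage is only a placeholder where a headless browser would pause), it selects `<h2>` text as a stand-in for real selectors, and the `run` and `fetcher` names are hypothetical. Injecting the fetcher lets you rehearse against saved HTML without touching the network.

```python
import json
import urllib.request
from html.parser import HTMLParser
from typing import Callable


def fetch(url: str) -> str:
    """Fetch stage: plain HTTP GET; no JavaScript runs, so SPA content may be missing."""
    with urllib.request.urlopen(url, timeout=30) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


class H2Text(HTMLParser):
    """Select stage: collect the text inside every <h2> (stand-in for a real selector)."""

    def __init__(self) -> None:
        super().__init__()
        self.inside = False
        self.items: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self.inside = True
            self.items.append("")

    def handle_endtag(self, tag):
        if tag == "h2":
            self.inside = False

    def handle_data(self, data):
        if self.inside:
            self.items[-1] += data


def run(url: str, fetcher: Callable[[str], str] = fetch) -> str:
    html = fetcher(url)                      # 1. fetch
    # 2. wait: nothing to wait for with plain HTTP; a headless browser would
    #    pause here until the content you need has rendered.
    parser = H2Text()                        # 3. select
    parser.feed(html)
    parser.close()
    rows = [{"url": url, "heading": text.strip()} for text in parser.items]
    return json.dumps(rows)                  # 4. serialize


if __name__ == "__main__":
    # Offline rehearsal: a fake fetcher returns saved HTML instead of a live page
    def saved_page(url: str) -> str:
        return "<h1>Shop</h1><h2> Widget </h2><p>...</p><h2>Gadget</h2>"

    print(run("https://example.com/", fetcher=saved_page))
```

Swapping `fetch` for a browser-driven fetcher changes who runs stage 1 and 2, but stages 3 and 4 stay the same, which is the point of the paragraph above.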

Inside the bundle

What the html_web_scraper JSON encodes (and why it matters)

No vendor CSV sample ships in the bundle—treat the exported project JSON as the contract. The published shape chains Navigate to your starting URL, Sleep to allow render, and Extract HTML with fields such as selector, innerHtml, and multiple so you can lift a DOM subtree or full page HTML intentionally. That transparency answers the comparison question directly: the HTML template is for teams that want inspectable blocks on local disks, not an opaque monthly quota.
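To see what `innerHtml` and `multiple` plausibly control, here is a hedged Python sketch. It assumes `innerHtml` toggles between a node’s inner markup and its full outer markup, and that `multiple` toggles between the first match and all matches; confirm the real block behavior in UScraper before relying on it. The sketch uses the standard library’s `xml.etree.ElementTree`, so the input must be well-formed XHTML-style markup and the selector is ElementTree’s limited XPath subset, not a CSS selector. The `extract_html` name is hypothetical.

```python
import copy
import xml.etree.ElementTree as ET


def extract_html(markup: str, path: str, inner_html: bool, multiple: bool) -> list[str]:
    """Return HTML strings for nodes matching an ElementTree XPath-subset path.

    Assumed flag semantics (hypothetical mirror of an Extract HTML block):
    inner_html=True  -> markup inside the node; False -> include the node's own tags.
    multiple=True    -> every match; False -> first match only.
    """
    root = ET.fromstring(markup)          # requires well-formed markup
    matches = root.findall(path)
    if not multiple:
        matches = matches[:1]
    results = []
    for el in matches:
        if inner_html:
            # Leading text plus each serialized child; ET.tostring includes a
            # child's tail, which is exactly the text between siblings.
            parts = [el.text or ""]
            parts += [ET.tostring(child, encoding="unicode") for child in el]
            results.append("".join(parts))
        else:
            clone = copy.copy(el)         # shallow copy so the original keeps its tail
            clone.tail = None             # tail text belongs to the parent, not this node
            results.append(ET.tostring(clone, encoding="unicode"))
    return results


if __name__ == "__main__":
    page = '<div><ul class="items"><li>A <b>1</b></li>\n<li>B</li></ul></div>'
    print(extract_html(page, ".//li", inner_html=True, multiple=True))
    print(extract_html(page, ".//li", inner_html=False, multiple=False))
```

The two calls show the four combinations collapsing to two questions: do you want the wrapper tag, and do you want one node or all of them. Pointing the path at the root element is the "full page HTML" case.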

Download the JSON blueprint

Pull the workflow from html_web_scraper and read block order before judging parity with cloud actors.
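Reading block order can also be scripted once the export sits on disk. The sketch assumes a hypothetical export shape, a top-level `blocks` array whose entries carry a `type` string; the real html_web_scraper file may be a graph with different keys, so adapt the lookup after opening it. The check confirms that Navigate, Sleep, and Extract HTML appear in that relative order while allowing other blocks in between.

```python
import json

# Hypothetical block type names; match them to the strings in your actual export.
EXPECTED_ORDER = ("Navigate", "Sleep", "Extract HTML")


def block_order(exported_json: str) -> list[str]:
    """List block types in file order, assuming a top-level "blocks" array (hypothetical)."""
    data = json.loads(exported_json)
    return [block["type"] for block in data["blocks"]]


def follows_recipe(order: list[str], expected: tuple[str, ...] = EXPECTED_ORDER) -> bool:
    """True if the expected types appear in this relative order; extra blocks may sit between."""
    remaining = iter(order)
    # `in` on an iterator consumes it, so each step must be found after the previous one
    return all(step in remaining for step in expected)


if __name__ == "__main__":
    sample = json.dumps({"blocks": [
        {"type": "Navigate", "url": "https://example.com/"},
        {"type": "Sleep", "seconds": 3},
        {"type": "Extract HTML", "selector": "main", "innerHtml": True, "multiple": False},
    ]})
    order = block_order(sample)
    print(order, follows_recipe(order))
```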

Tune waits and selectors

Check selector strings against the live page in DevTools, and lengthen Sleep when single-page apps hydrate late. It is the same chore as in hosted tools, just on a different console.
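A fixed Sleep either wastes time or fires too early when hydration is slow. Code-first stacks usually poll for a readiness condition instead; the generic Python sketch below shows that pattern with a hypothetical `ready` callback (for example, "does the product grid contain at least one row yet?"). It is not a UScraper feature, only the technique to weigh against a longer Sleep.

```python
import time
from typing import Callable


def wait_until(ready: Callable[[], bool], timeout: float = 10.0, interval: float = 0.25) -> bool:
    """Poll `ready` until it returns True or `timeout` seconds pass; report which happened."""
    deadline = time.monotonic() + timeout
    while True:
        if ready():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)


if __name__ == "__main__":
    calls = {"n": 0}

    def grid_has_rows() -> bool:
        # Hypothetical readiness check; here it simply succeeds on the third poll
        calls["n"] += 1
        return calls["n"] >= 3

    print(wait_until(grid_has_rows, timeout=2.0, interval=0.05))
```

Returning `False` on timeout, rather than raising, lets the caller decide whether a slow page should skip the row or fail the run.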

Decide downstream shape

Raw HTML fragments may feed parsers, readability tools, or a follow-on structured export step—plan the handoff before you parallelize runs.
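One common handoff is flattening raw fragments to plain text and writing CSV that a spreadsheet can open. The stdlib sketch below assumes one fragment per row and skips `<script>` and `<style>` content; joining text chunks with spaces is a crude heuristic, so dedicated readability or parsing libraries will do better on real pages. The function names are hypothetical.

```python
import csv
import io
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text nodes, skipping anything inside <script> or <style>."""

    def __init__(self) -> None:
        super().__init__()
        self.skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth:
            self.chunks.append(data)


def fragment_to_text(fragment: str) -> str:
    parser = VisibleText()
    parser.feed(fragment)
    parser.close()
    # Join chunks with spaces, then collapse whitespace runs (crude but predictable)
    return " ".join(" ".join(parser.chunks).split())


def fragments_to_csv(fragments: list[str]) -> str:
    """One CSV row per fragment: its position and its flattened text."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["index", "text"])
    for i, fragment in enumerate(fragments):
        writer.writerow([i, fragment_to_text(fragment)])
    return buf.getvalue()


if __name__ == "__main__":
    print(fragments_to_csv([
        "<p>Widget <b>Pro</b></p>",
        "<div>A &amp; B<script>var x = 1;</script></div>",
    ]))
```

Deciding this shape before parallelizing means every worker emits the same columns, so merging outputs later is a concatenation rather than a cleanup project.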

Return to the template library

When you need adjacent recipes, browse UScraper templates and bookmark the blog index for comparisons and tutorials.

Related reading

Where to go next on UScraper

- Pair this comparison with hands-on guides in https://uscraper.io/blog once you choose a lane.

- When your decision matrix rewards Windows-local execution, visual block editing, and template JSON you can diff in Git, import https://uscraper.io/templates/html_web_scraper and iterate there first—knowing massive distributed crawl problems may still point you to Apify Store or a code-first crawler later.

FAQ

Frequently asked questions

HTML scraping extracts structure or text after a document is fetched and parsed—often CSS selectors or XPath against a DOM, as MDN covers for querySelector. Full platforms add scheduling, proxies, collaborative projects, or distributed workers. Many teams start with extraction only and grow into platforms when concurrency, compliance workflows, or team permissions—not raw selector syntax—become the bottleneck.